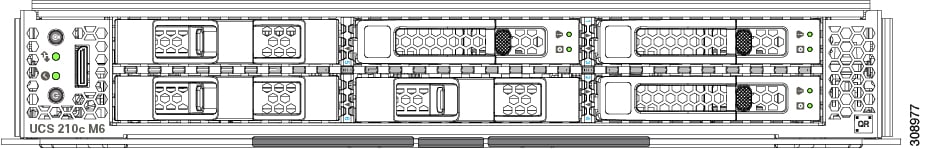

Cisco UCS X210c M6 Compute Node Overview

The Cisco UCS X210c M6 is a single-slot compute node that supports two CPU sockets for Intel 3rd Generation Xeon CPUs. The compute node supports the following features with one or two identical processors installed:

- 32 DDR4 DIMMs (16 DIMMs per CPU)
- One front mezzanine module, which can support any of the following:
  - A front storage module, which supports multiple storage device configurations:
    - Up to six SAS/SATA RAID-compatible drives connected over PCIe to the rest of the compute node. RAID levels 0, 1, 5, 6, 10, and 50 are supported.
    - Up to six NVMe drives.
    - A mixture of up to six SAS/SATA or NVMe drives.
  - A GPU-based mixed compute and storage module. For more information, see Optional Hardware Configurations.
- Connection with a paired UCS PCIe module, such as the Cisco UCS X440p PCIe node, to support GPU offload and acceleration. For more information, see Optional Hardware Configurations.
- One modular LAN on motherboard (mLOM/VIC) module supporting a maximum of 200G traffic, 100G to each fabric. For more information, see mLOM and Rear Mezzanine Slot Support.
- One rear mezzanine module (UCSX-V4-PCIME or UCSX-V4-Q25GME) that provides connection between PCIe nodes (such as the Cisco UCS X440p PCIe node) and peer compute nodes to support GPU offload and acceleration. For more information, see mLOM and Rear Mezzanine Slot Support.
- A mini-storage module socket for one M.2 module with slots for two M.2 drives.
- Up to 8 UCS X210c M6 compute nodes can be installed in a Cisco UCS X9508 modular system.

Compute Node Front Panel

The Cisco UCS X210c M6 front panel contains system LEDs that provide visual indicators for how the overall compute node is operating. An external connector is also supported.


| Callout | Description |
|---|---|
| 1 | Power LED and Power Switch. The LED provides a visual indicator about whether the compute node is on or off. The switch is a push button that can power off or power on the compute node. See Front Panel Buttons. |
| 2 | System Activity LED. The LED blinks to show whether data or network traffic is written to or read from the compute node. If no traffic is detected, the LED is dark. The LED is updated every 10 seconds. |
| 3 | System Health LED. A multifunction LED that indicates the state of the compute node. |
| 4 | Locator LED/Switch. The LED provides a visual indicator that glows solid blue to identify a specific compute node. The switch is a push button that toggles the Locator LED on or off. See Front Panel Buttons. |
| 5 | External Optical Connector (OcuLink) that supports local console functionality. See Local Console. |

Front Panel Buttons

The front panel has some buttons that are also LEDs. See Compute Node Front Panel.

- The front panel Power button is a multi-function button that controls system power for the compute node.
  - Immediate power up: Quickly pressing and releasing the button, but not holding it down, causes a powered-down compute node to power up.
  - Immediate power down: Pressing the button and holding it down for 7 seconds or longer before releasing it causes a powered-up compute node to immediately power down.
  - Graceful power down: Quickly pressing and releasing the button, but not holding it down, causes a powered-up compute node to power down in an orderly fashion.
- The front panel Locator button is a toggle that controls the Locator LED. Quickly pressing the button, but not holding it down, toggles the Locator LED on (it glows a steady blue) or off (it is dark). The LED can also be dark if the compute node is not receiving power.

For more information, see Interpreting LEDs.
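The Power button behavior described above can be summarized as a small decision function. The following is only an illustrative sketch, not Cisco firmware logic: the function name, the enum, and the 1-second cutoff for a "quick" press are assumptions (the text says only "quickly"). Presses that the text does not describe are reported as unspecified rather than guessed.

```python
from enum import Enum


class PowerAction(Enum):
    POWER_UP = "immediate power up"
    GRACEFUL_POWER_DOWN = "graceful power down"
    IMMEDIATE_POWER_DOWN = "immediate power down"
    UNSPECIFIED = "not described in this section"


# Stated in the text: holding for 7 seconds or longer forces an immediate power down.
FORCE_OFF_HOLD_SECONDS = 7.0
# Assumption: the text only says "quickly"; 1 second is an illustrative cutoff.
QUICK_PRESS_MAX_SECONDS = 1.0


def power_button_action(powered_on: bool, hold_seconds: float) -> PowerAction:
    """Map the node power state and the button hold time to the documented action."""
    if hold_seconds < 0:
        raise ValueError("hold_seconds must be non-negative")
    quick = hold_seconds <= QUICK_PRESS_MAX_SECONDS
    if not powered_on:
        # Only a quick press is documented for a powered-down node.
        return PowerAction.POWER_UP if quick else PowerAction.UNSPECIFIED
    if hold_seconds >= FORCE_OFF_HOLD_SECONDS:
        return PowerAction.IMMEDIATE_POWER_DOWN
    if quick:
        return PowerAction.GRACEFUL_POWER_DOWN
    # Longer than a quick press but shorter than 7 seconds: not covered by the text.
    return PowerAction.UNSPECIFIED


if __name__ == "__main__":
    print(power_button_action(powered_on=True, hold_seconds=8).value)
```

For example, a quick press on a running node maps to a graceful power down, while the same press on a powered-down node maps to a power up.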

Drive Bays

Each Cisco UCS X210c M6 compute node has a front mezzanine slot that can hold 2.5-inch SAS, SATA, or NVMe drives in different types and quantities. A drive blank panel (UCSC-BBLKD-S2) must cover all empty drive bays.

Drive Front Panels

The front drives are installed in the front mezzanine slot of the compute node. SAS/SATA and NVMe drives are supported.

Compute Node Front Panel with SAS/SATA Drives

The compute node front panel contains the front mezzanine module, which can support a maximum of 6 SAS/SATA drives. The drives have additional LEDs that provide visual indicators about each drive's status.

| Callout | Description |
|---|---|
| 1 | Drive Health LED |
| 2 | Drive Activity LED |

Compute Node Front Panel with NVMe Drives

The compute node front panel contains the front mezzanine module, which can support a maximum of six 2.5-inch NVMe drives.
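As a planning aid, the drive limits above can be expressed as a short check that also counts how many blank panels an order needs. This is a sketch under assumptions: the helper name is hypothetical, and it assumes the front storage module exposes exactly six bays (the text states only a maximum of six drives).

```python
from typing import List

# From the text: at most six front drives, in any mix of SAS, SATA, and NVMe.
MAX_FRONT_DRIVES = 6
SUPPORTED_DRIVE_TYPES = {"SAS", "SATA", "NVMe"}
# From the text: empty bays must be covered by this blank panel.
BLANK_PANEL_PID = "UCSC-BBLKD-S2"


def blanks_required(drives: List[str]) -> int:
    """Return how many blank panels are needed for the given list of drive types.

    Assumption: the front storage module has exactly MAX_FRONT_DRIVES bays.
    """
    unknown = [d for d in drives if d not in SUPPORTED_DRIVE_TYPES]
    if unknown:
        raise ValueError(f"Unsupported drive types: {unknown}")
    if len(drives) > MAX_FRONT_DRIVES:
        raise ValueError(f"At most {MAX_FRONT_DRIVES} front drives are supported")
    return MAX_FRONT_DRIVES - len(drives)


if __name__ == "__main__":
    mixed = ["SAS", "SAS", "NVMe", "NVMe"]
    print(f"{blanks_required(mixed)} x {BLANK_PANEL_PID}")
```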

Local Console

The local console connector is a horizontally oriented OcuLink connector on the compute node faceplate.

The connector provides a direct connection to a compute node so that you can install an operating system locally rather than remotely.

The connector terminates to a KVM dongle cable (UCSX-C-DEBUGCBL) that provides a connection into a Cisco UCS compute node. The cable provides connection to the following:

- VGA connector for a monitor
- Host Serial Port
- USB port connector for a keyboard and mouse

With this cable, you can create a direct connection to the operating system and the BIOS running on a compute node. The KVM cable is ordered separately; it is not included in the compute node's accessory kit.

| Callout | Description |
|---|---|
| 1 | OcuLink connector to compute node |
| 2 | Host Serial Port |
| 3 | USB connector to connect to single USB 3.0 port (keyboard or mouse) |
| 4 | VGA connector for a monitor |

mLOM and Rear Mezzanine Slot Support

The following rear mezzanine and modular LAN on motherboard (mLOM) modules are supported.

- Cisco UCS VIC 15422 (UCSX-ME-V5Q50G), which supports:
  - Four 25G KR interfaces.
  - Can occupy the server's mezzanine slot at the bottom rear of the chassis.
  - An included bridge card extends this VIC's 2x 50 Gbps of network connections through IFM connectors, bringing the total bandwidth to 100 Gbps per fabric (for a total of 200 Gbps per server).

- Cisco UCS VIC 15420 mLOM (UCSX-ML-V5Q50G), which supports:
  - Quad-port 25G mLOM.
  - Occupies the server's modular LAN on motherboard (mLOM) slot.
  - Enables up to 50 Gbps of unified fabric connectivity to each of the chassis intelligent fabric modules (IFMs) for 100 Gbps connectivity per server.

- Cisco UCS VIC 15231 mLOM (UCSX-ML-V5D200G), which supports:
  - x16 PCIe Gen 4 host interface to the UCS X210c M6 compute node
  - 4GB DDR4 DIMM, 3200MHz with ECC
  - Two or four KR interfaces that connect to Cisco UCS X Series Intelligent Fabric Modules (IFMs):
    - Two 100G KR interfaces connecting to the UCSX 100G Intelligent Fabric Module (UCSX-I-9108-100G)
    - Four 25G KR interfaces connecting to the Cisco UCSX 9108 25G Intelligent Fabric Module (UCSX-I-9108-25G)

- Cisco UCS VIC 15230 mLOM (UCSX-ML-V5D200GV2), which supports:
  - x16 PCIe Gen 4 host interface to the UCS X210c M6 compute node
  - 4GB DDR4 DIMM, 3200MHz with ECC
  - Two or four KR interfaces that connect to Cisco UCS X Series Intelligent Fabric Modules (IFMs):
    - Two 100G KR interfaces connecting to the UCSX 100G Intelligent Fabric Module (UCSX-I-9108-100G)
    - Four 25G KR interfaces connecting to the Cisco UCSX 9108 25G Intelligent Fabric Module (UCSX-I-9108-25G)
  - Secure boot support

- UCS VIC 14425 4x25G mLOM for X Compute Node (UCSX-V4-Q25GML)
  - x16 PCIe Gen 3 host connection to the compute node
  - 2GB DDR3 DIMMs, 1866 MHz
  - Four 25G KR interfaces that can connect to the Cisco UCSX 9108 25G Intelligent Fabric Module (UCSX-I-9108-25G)
  - Four 25G KR interfaces that can connect to the Cisco UCSX 9108 100G Intelligent Fabric Module (UCSX-I-9108-100G)

The following modular network mezzanine cards are supported.

Note: The UCS VIC 14000 bridge connector (UCSX-V4-BRIDGE) is required with the mezzanine card to connect the UCS X-Series compute nodes to Cisco UCS X Series IFMs. Also, the UCS VIC 15231 mLOM and UCS VIC 14825 rear mezzanine card are not supported together in the same server.

- UCS VIC 14825 4x25G Mezzanine Card for X Compute Node (UCSX-V4-Q25GME)
  - x16 PCIe Gen 3 host connection to the compute node
  - 2GB DDR3 DIMMs, 1866 MHz
  - Four 25G KR interfaces that can connect to the Cisco UCSX 9108 25G Intelligent Fabric Module (UCSX-I-9108-25G)
  - Four 25G KR interfaces that can connect to the Cisco UCSX 9108 100G Intelligent Fabric Module (UCSX-I-9108-100G)
  - Features an embedded VIC that bridges to the mLOM card
  - Supports connectivity for Cisco UCS PCIe Nodes, such as the Cisco UCS X440p PCIe Node

- Cisco UCS PCI Mezz card for X-Fabric (UCSX-V4-PCIME) provides connectivity for Cisco UCS PCIe Nodes, such as the Cisco UCS X440p PCIe Node, which supports GPU offload and acceleration when a compute node is paired with the PCIe node.

The UCSX-V4-PCIME or UCSX-V4-Q25GME is required when a compute node is paired with a PCIe node. For more information, see Optional Hardware Configurations.
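The bandwidth figures quoted for the VIC options follow from simple arithmetic on interface count and speed. The sketch below reproduces that arithmetic. The class and function names are hypothetical, and it assumes that every card splits its KR interfaces evenly across two fabrics (IFMs). The text states this split explicitly only for the VIC 15420.

```python
from dataclasses import dataclass
from typing import Dict

# Assumption: each compute node connects to two fabrics (one per IFM),
# and a card's interfaces are divided evenly between them.
FABRICS = 2


@dataclass(frozen=True)
class VicOption:
    name: str
    interfaces: int          # number of KR interfaces, from the text
    gbps_per_interface: int  # speed of each KR interface, from the text

    def total_gbps(self) -> int:
        return self.interfaces * self.gbps_per_interface


def combined_bandwidth(*options: VicOption) -> Dict[str, int]:
    """Sum the bandwidth of the installed cards per fabric and per server."""
    total = sum(option.total_gbps() for option in options)
    return {"per_fabric_gbps": total // FABRICS, "per_server_gbps": total}


VIC_15420 = VicOption("VIC 15420 mLOM", interfaces=4, gbps_per_interface=25)
VIC_15422 = VicOption("VIC 15422 rear mezzanine", interfaces=4, gbps_per_interface=25)
VIC_15231_100G = VicOption("VIC 15231 mLOM on 100G IFMs", interfaces=2, gbps_per_interface=100)

if __name__ == "__main__":
    print(combined_bandwidth(VIC_15420))
    print(combined_bandwidth(VIC_15420, VIC_15422))
    print(combined_bandwidth(VIC_15231_100G))
```

Under these assumptions, the VIC 15420 alone yields 50 Gbps per fabric and 100 Gbps per server, and adding the VIC 15422 doubles both figures, which matches the numbers given in the list above.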

System Health States

The compute node's front panel has a System Health LED, which is a visual indicator that shows whether the compute node is operating in a normal runtime state (the LED glows steady green). If the System Health LED shows anything other than solid green, the compute node is not operating normally, and it requires attention.

The following System Health LED states indicate that the compute node is not operating normally.

| System Health LED Color | Compute Node State | Conditions |
|---|---|---|
| Solid Amber | Degraded | |
| Blinking Amber | Critical | |

Interpreting LEDs

| LED | Color | Description |
|---|---|---|
| Compute Node Power (callout 1 on the Chassis Front Panel) | Off | Power off. |
| | Green | Normal operation. |
| | Amber | Standby. |
| Compute Node Activity (callout 2 on the Chassis Front Panel) | Off | None of the network links are up. |
| | Green | At least one network link is up. |
| Compute Node Health (callout 3 on the Chassis Front Panel) | Off | Power off. |
| | Green | Normal operation. |
| | Amber | Degraded operation. |
| | Blinking Amber | Critical error. |
| Compute Node Locator LED and button (callout 4 on the Chassis Front Panel) | Off | Locator not enabled. |
| | Blinking Blue 1 Hz | Locates a selected compute node. If the LED is not blinking, the compute node is not selected. You can activate the LED in Cisco Intersight or by pressing the button, which toggles the LED on and off. |

| LED | Color | Description |
|---|---|---|
| Drive Activity | Off | Inactive. |
| | Green | Green ON for presence and Green Flashing for I/O activity. |
| Drive Health | Off | No fault detected, the drive is not installed, or it is not receiving power. |
| | Amber | Fault detected. |
| | Flashing Amber 4 Hz | Rebuild drive active. If the Drive Activity LED is also flashing amber, a drive rebuild is in progress. |
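
The LED tables above lend themselves to a simple lookup, for example in a script that turns a technician's observation into the documented meaning. The following is a minimal sketch. The dictionary keys and the `interpret_led` helper are hypothetical names, and the entries are shortened versions of the table descriptions rather than any official API.

```python
from typing import Dict, Tuple

# Keys are (LED name, observed color), lowercased. Values paraphrase the tables above.
LED_STATES: Dict[Tuple[str, str], str] = {
    ("compute node power", "off"): "Power off.",
    ("compute node power", "green"): "Normal operation.",
    ("compute node power", "amber"): "Standby.",
    ("compute node activity", "off"): "None of the network links are up.",
    ("compute node activity", "green"): "At least one network link is up.",
    ("compute node health", "off"): "Power off.",
    ("compute node health", "green"): "Normal operation.",
    ("compute node health", "amber"): "Degraded operation.",
    ("compute node health", "blinking amber"): "Critical error.",
    ("compute node locator", "off"): "Locator not enabled.",
    ("compute node locator", "blinking blue"): "Locates a selected compute node.",
    ("drive activity", "off"): "Inactive.",
    ("drive activity", "green"): "Drive present.",
    ("drive activity", "flashing green"): "I/O activity.",
    ("drive health", "off"): "No fault detected, drive not installed, or no power.",
    ("drive health", "amber"): "Fault detected.",
    ("drive health", "flashing amber"): "Rebuild drive active.",
}


def interpret_led(led: str, color: str) -> str:
    """Return the documented meaning of an LED state, ignoring case and padding."""
    key = (led.strip().lower(), color.strip().lower())
    try:
        return LED_STATES[key]
    except KeyError:
        raise KeyError(f"No documented meaning for {led!r} showing {color!r}") from None


if __name__ == "__main__":
    print(interpret_led("Compute Node Health", "Blinking Amber"))
```

States that the tables do not list raise an error instead of returning a guess, so an unexpected color is surfaced rather than silently mapped.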

Optional Hardware Configurations

The Cisco UCS X210c M6 compute node can be installed in a Cisco UCS X9508 Server Chassis either as a standalone compute node or with the following optional hardware configurations.

Cisco UCS X10c Front Mezzanine GPU Module

As an option, the compute node can support a GPU-based front mezzanine module, the Cisco UCS X10c Front Mezzanine GPU Module.

Each GPU front mezzanine module contains:

- A GPU adapter card supporting zero, one, or two Cisco T4 GPUs (UCSX-GPU-T4-MEZZ). Each GPU is connected directly into the GPU adapter card by an x8 Gen 4 PCIe connection.
- A storage adapter and riser card supporting zero, one, or two U.2 NVMe drives. NVMe RAID is supported through an Intel VROC key.

For information about the optional GPU front mezzanine module, see the Cisco UCS X10c Front Mezzanine GPU Module Installation and Service Guide.

Cisco UCS X440p PCIe Node

As an option, the compute node can be paired with a full-slot GPU acceleration hardware module in the Cisco UCS X9508 Server Chassis. This option is supported through the Cisco UCS X440p PCIe Node. For information about this option, see the Cisco UCS X440p PCIe Node Installation and Service Guide.

Note: When the compute node is paired with the Cisco UCS X440p PCIe node, a rear mezzanine card that provides X-Fabric connectivity (UCSX-V4-PCIME or UCSX-V4-Q25GME) is required. These rear mezzanine cards install on the compute node.

Note: For a full-slot Cisco A100-80 GPU (UCSC-GPU-A100-80), firmware version 4.2(2) is the minimum version to support the GPU.
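
The pairing and firmware requirements in this document can be checked before hardware is ordered. The sketch below encodes three rules stated in the text: the rear mezzanine requirement for PCIe node pairing, the VIC 15231 and VIC 14825 exclusion, and the A100-80 minimum firmware. The function names are hypothetical, and the version parser assumes firmware strings of the form `4.2(2)` or `4.2(2a)`, which is an assumption about the format rather than a documented rule.

```python
import re
from typing import List, Optional, Tuple

PCIE_NODE_REAR_MEZZ = {"UCSX-V4-PCIME", "UCSX-V4-Q25GME"}
VIC_15231_MLOM = "UCSX-ML-V5D200G"
VIC_14825_MEZZ = "UCSX-V4-Q25GME"
A100_80_GPU = "UCSC-GPU-A100-80"
A100_80_MIN_FIRMWARE = "4.2(2)"


def parse_firmware(version: str) -> Tuple[int, int, int, str]:
    """Parse 'major.minor(patch[suffix])' into a comparable tuple (assumed format)."""
    match = re.fullmatch(r"(\d+)\.(\d+)\((\d+)([a-z]*)\)", version.strip())
    if match is None:
        raise ValueError(f"Unrecognized firmware version: {version!r}")
    major, minor, patch, suffix = match.groups()
    return int(major), int(minor), int(patch), suffix


def check_configuration(
    mlom: Optional[str],
    rear_mezz: Optional[str],
    paired_with_pcie_node: bool,
    gpus: List[str],
    firmware: str,
) -> List[str]:
    """Return a list of rule violations; an empty list means none were found."""
    problems: List[str] = []
    if paired_with_pcie_node and rear_mezz not in PCIE_NODE_REAR_MEZZ:
        problems.append("PCIe node pairing requires UCSX-V4-PCIME or UCSX-V4-Q25GME.")
    if mlom == VIC_15231_MLOM and rear_mezz == VIC_14825_MEZZ:
        problems.append("VIC 15231 mLOM and VIC 14825 rear mezzanine are not supported together.")
    if A100_80_GPU in gpus and parse_firmware(firmware) < parse_firmware(A100_80_MIN_FIRMWARE):
        problems.append(f"{A100_80_GPU} requires firmware {A100_80_MIN_FIRMWARE} or later.")
    return problems


if __name__ == "__main__":
    for problem in check_configuration(
        mlom=VIC_15231_MLOM,
        rear_mezz=VIC_14825_MEZZ,
        paired_with_pcie_node=True,
        gpus=[A100_80_GPU],
        firmware="4.2(1)",
    ):
        print(problem)
```

The check covers only the rules quoted here. It is not a substitute for the compatibility information in the referenced installation and service guides.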
